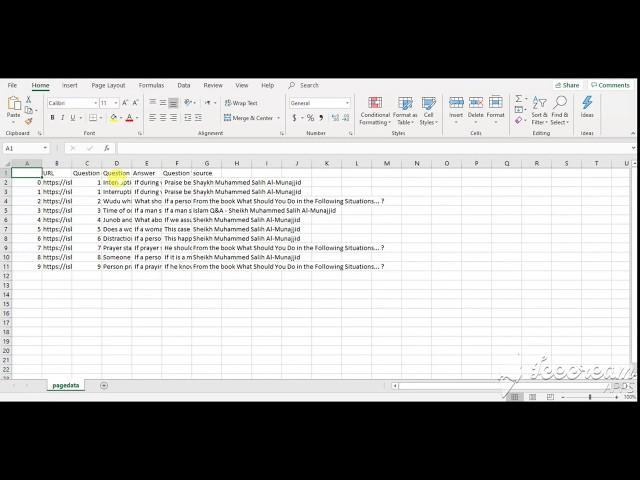

Web Scraping / Data Extraction Using Python and Saving to a CSV File

Comments:

Thanks

I need your help

Who is 'HOURIS'? Do you think there (in Heav) will be something like this? 🙄🤔

Thanks a lot, you explained it really well. This is much-needed content for ML learners like me. Thank you so much!

Are you in India?

Simple and awesome explanation

Ma'am, the code please.

How do I install the requests package? Please tell me.

I am getting an error while trying to import BeautifulSoup from bs4.

Thank you so much, you explain very well.

JazakAllahu Khair

One thing I want to know: if there are 200 links from which I have to scrape data like headings and paragraphs, this process will take a long time. How can I speed up execution?

Beautifully explained. Please upload more such content.

Straight to the problem, thank you so much.

This is perhaps the most valuable video for me so far!

Thanks for this Mohtarma!

Your way of explaining is very good, ma'am. Thank you so much. Please make more such good content. We love you, ma'am 💓💓💓

Thank you, this was very helpful, but can you please help me extract data from multiple URLs? I have an Excel file with URLs. I also used your method, but it doesn't work. Is there any other method?

I wasted two days and finally found your video. I understood it in just 15 minutes. Thank you.

Love you, ma'am, for this very useful knowledge.

How can I extract text (if possible, text from a specific section) from multiple resumes with extensions such as doc, docx, and pdf, and save it all into a new CSV file? The CSV file should have the format: filename, text, resume category.

I got a data extraction task from a company and didn't know how to do it, and I did it with ChatGPT in just 15 minutes 😂😂😂

Thanks to GPT 😂😂

Thanks, I referred to your video and it helped me a lot. Thank you.

I want a solution for one task. Can you provide it?

How can we do that for Economic Times?

Madam, where is the first video?

Let it stay in Urdu.

I have been trying it for two days. Then I found your video, well explained. This is the content we’ve all needed. Thanks so much!

Assalamu alaikum,

I saw your video and it is explained very simply. Thank you so much.

I am getting error 406.

Can you help?

This video is gold. Extremely well explained, and the language used is very simple. I understood the code in one go. Thank you for this video.

I am new here

Voice Issue...

Can you please make a tutorial video on regular expressions and data cleaning? :)

Nice explanation though :)

My lucky number is 525 and I am subscriber No. 525.

I have different types of URLs in an Excel file. How do I scrape data from those URLs?

Thank you, ma'am, you saved my day. Exactly what I needed.

Such a nice video. Thank you so much.

Hopefully you will provide the code,

for example on GitHub.

Super, yaar, thank you so much for the valuable content. Can you please do it for a shopping website, in English?

Sister, when I run the command, I get empty results like [ ] even though I am using the right class. Please guide me.

But I got an error like "No module named bs4",

and when I try to install bs4 in a Colab notebook, it shows "command not found" (! pip install bs4).

But ma'am, how do we use the search option? If we need to pass something to the search option, how do we send that request?

Amazing

Please make a video on how to scrape multiple pages at once, and then how to save all of the data into CSV, Excel, and a MySQL database. Please, please, please.

Thank you so much 😍😍👌

Thank you so much, ma'am, but the last part of the for-loop code is not visible. Please, I need your help.

When I run requests.get()

I get

<Response [406]>

How do I solve this, ma'am?

Hi, it's very good.

I just want to know how to get the anchor tag's value (its text); the href is not required.

Hello ma'am, can you share your Telegram link? I need your help. Please try to understand my problem.

Beautiful explanation. Please make videos on more methods of web scraping using Python, and also on how to convert data such as columns into rows and how to understand HTML.

Also, your voice is very heart-touching.