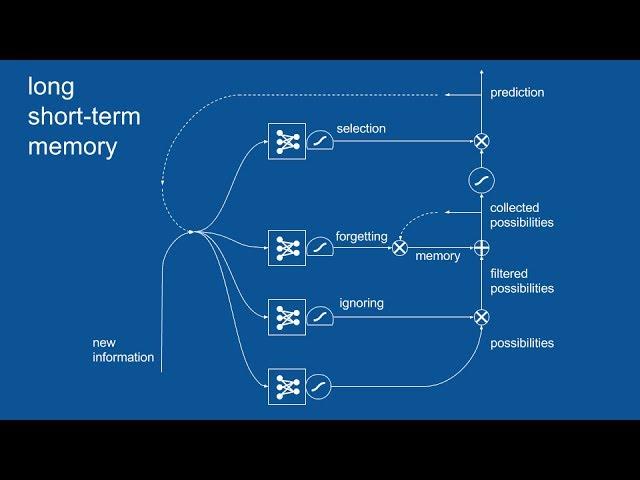

Recurrent Neural Networks (RNN) and Long Short-Term Memory (LSTM)

Comments:

Thanks, Brandon!

Very cool!

That was an amazing explanation. Very clear with the help of images and symbols.

Subtitles are auto-generated with lots of mistakes.

Thanks a lot for this straight to the point explanation. Really learnt a lot.

This is simply amazing and so clear.

Watching at 2x speed, and it still makes perfect sense! 🙏

Amazing explanation!!

The best intro to RNNs and LSTMs I have seen!

I'm a data science student from Taiwan and I want to say thank you. I learned much more about LSTMs after watching your video. Much appreciated, sir!

Waffles for dinner? Yuck.

Hi, I need some help here.

Why do we make the next hidden state equal to the long-term memory after filtering it? Why isn't the next hidden state simply the long-term memory (Ct) itself?

Subscribed! Thank you so, so much!

Exceptionally good, the best I've seen in this subject.

I don't usually leave comments like this, but this is extraordinary :) So easy to understand :) Thank you :)

Superb! Thanks!

The best video on LSTM and RNN I've seen. Thank you so much!

Are the neural network units in an LSTM trained independently, or simultaneously through backpropagation? I am assuming the latter, and that the function of each unit is simply learned through its placement. For example, the "selection" NN unit is just named that because of its placement, and we don't do anything special during the training phase to make it a "selection" unit.

You lost me at "former" and "latter".

Hey, thanks Brandon! Complexity put into layman's language. Just loved it!!!