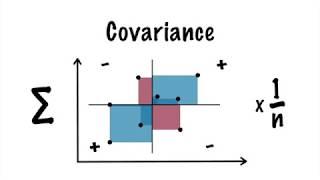

Visual Explanation of Principal Component Analysis, Covariance, SVD

Comments:

Would love to request an in person version

Sexy

Excellent video, thank you!

Gotta echo the other comments here. Very succinct and understandable. You brought in the linear algebra without getting bogged down in it. Folks that don't have a strong grasp of that subject will still probably be able to get the main points of your presentation. Nicely done!

PS: Video is targeted at people who already have a deep knowledge of what the video is trying to explain.

thank you for this amazing video

I have always dreaded statistics, but this video made these concepts so simple while connecting them to linear algebra. Thank you so much ❤

Why did you stop making videos?

I thought PCA was a hard concept. Your video is so great!

thank you for this amazing and simple explanation

Thank you! very nice video, well explained!

Great concise presentation, much appreciated! 👍

Good job, no wasted time

Awesome explanation!! Nobody did it better!

Around 1:36 you said "we divide by n for covariance", but we divide by n-1 instead. Please do check on that. Thanks for the video. Maybe I should say the estimated covariance has the n-1 division.
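
For reference, here is a minimal sketch of the two conventions the comment contrasts. Dividing by n gives the population covariance, and dividing by n - 1 gives the unbiased sample estimate. The function name `covariance` and its `ddof` parameter are illustrative assumptions (the parameter name borrows NumPy's "delta degrees of freedom" convention), not code from the video. For PCA the choice only rescales every eigenvalue by the same factor n/(n - 1), so the principal directions come out the same either way.

```python
def covariance(xs, ys, ddof=0):
    """Covariance of two equal-length sequences (illustrative helper).

    ddof=0 divides by n (population covariance);
    ddof=1 divides by n - 1 (unbiased sample covariance).
    """
    n = len(xs)
    if n != len(ys):
        raise ValueError("xs and ys must have the same length")
    if n - ddof <= 0:
        raise ValueError("need more than ddof data points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations from the two means.
    total = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return total / (n - ddof)


xs = [2.0, 4.0, 6.0, 8.0]
ys = [1.0, 3.0, 2.0, 6.0]
print(covariance(xs, ys, ddof=0))  # divide by n = 4     -> 3.5
print(covariance(xs, ys, ddof=1))  # divide by n - 1 = 3 -> 4.666...
```

Python's standard library draws the same line for a single variable: `statistics.pvariance` divides by n, while `statistics.variance` divides by n - 1.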

Very nice video. I plan to use it for my teaching. What puzzles me a bit is that the PCs you give as an example are not orthogonal to each other.
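
One way to check the orthogonality point: a covariance matrix is symmetric, and eigenvectors of a symmetric matrix that belong to distinct eigenvalues are orthogonal. Principal components computed from it are therefore perpendicular. If a plot shows them at a non-right angle, unequal axis scaling is one common cause. The sketch below uses a closed-form eigendecomposition for the 2x2 case. The helpers `covariance_matrix` and `eig_sym_2x2` and the sample points are hypothetical and were chosen only for illustration.

```python
import math


def covariance_matrix(points):
    """Entries (sxx, sxy, syy) of the 2x2 sample covariance (divides by n - 1)."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    return sxx, sxy, syy


def eig_sym_2x2(a, b, c):
    """Eigenpairs of the symmetric matrix [[a, b], [b, c]], largest eigenvalue first.

    Each eigenvector is returned as a unit-length (x, y) tuple.
    """
    if b == 0:
        # Already diagonal: the coordinate axes are the eigenvectors.
        pairs = [(a, (1.0, 0.0)), (c, (0.0, 1.0))]
        return sorted(pairs, key=lambda p: p[0], reverse=True)
    mid = (a + c) / 2
    rad = math.hypot((a - c) / 2, b)
    pairs = []
    for lam in (mid + rad, mid - rad):
        # (b, lam - a) solves (A - lam * I) v = 0 whenever b != 0.
        vx, vy = b, lam - a
        norm = math.hypot(vx, vy)
        pairs.append((lam, (vx / norm, vy / norm)))
    return pairs


points = [(2.0, 1.0), (4.0, 3.0), (6.0, 2.0), (8.0, 6.0), (5.0, 4.0)]
sxx, sxy, syy = covariance_matrix(points)
(lam1, pc1), (lam2, pc2) = eig_sym_2x2(sxx, sxy, syy)
dot = pc1[0] * pc2[0] + pc1[1] * pc2[1]
print(lam1, lam2)
print(pc1, pc2)
print(dot)  # zero up to floating-point rounding
```

Sorting by eigenvalue puts PC1 first. The two eigenvalues also sum to the trace sxx + syy, which is the total variance of the data.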

Thank you. It was beautiful

Great video! Can anyone tell how she decided that PC1 is spine length and PC2 is Body mass? Should we guess (hypothesize) this in real world scenarios?
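
In general, each principal component is a linear combination of the original (centered) variables. The weights are the entries of its eigenvector, often called loadings. Names such as "spine length" or "body mass" are an interpretation the analyst makes by checking which variables carry the largest absolute loadings, because PCA itself only produces the weights. Take two measured variables $x_1, x_2$ with means $\bar{x}_1, \bar{x}_2$ and a first eigenvector $\mathbf{v}_1 = (v_{11}, v_{12})^\top$. The notation here is assumed, not taken from the video. Under it, the PC1 score of one observation is:

$$
z_1 = \mathbf{v}_1^\top(\mathbf{x} - \bar{\mathbf{x}}) = v_{11}(x_1 - \bar{x}_1) + v_{12}(x_2 - \bar{x}_2)
$$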

Very nice, pls keep posting

Good lecture

Graphical interpretation of covariance is very intuitive and useful for me. Thank you.

Good explanation

great explanation

I do understand that eigenvalues represent the factor by which the eigenvectors are scaled, but how do they signify “the importance of certain behaviors in a system”, what other information do eigenvalues tell us other than a scaling factor? Also, why do eigenvectors point towards the spread of data?
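
A short derivation addresses both questions. The notation is standard and assumed here rather than taken from the video. Let $C$ be the covariance matrix of the centered data and $\mathbf{u}$ a unit vector. The variance of the data projected onto $\mathbf{u}$ is a quadratic form in $C$. When $\mathbf{u}$ is an eigenvector, that variance equals its eigenvalue, and maximizing it over all unit vectors picks out the eigenvector with the largest eigenvalue. An eigenvalue of the covariance matrix is therefore the amount of variance along its eigenvector, which is why the eigenvectors with large eigenvalues point along the directions of greatest spread.

$$
\operatorname{Var}(\mathbf{u}^\top \mathbf{x}) = \mathbf{u}^\top C \mathbf{u},
\qquad
C\mathbf{u} = \lambda \mathbf{u},\ \|\mathbf{u}\| = 1
\;\Longrightarrow\;
\mathbf{u}^\top C \mathbf{u} = \lambda\, \mathbf{u}^\top \mathbf{u} = \lambda
$$

$$
\max_{\|\mathbf{u}\| = 1} \mathbf{u}^\top C \mathbf{u} = \lambda_{\max}, \quad \text{attained at the corresponding eigenvector}
$$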

No one explains why they use the covariance matrix. Why not use the actual data and find its eigenvectors/eigenvalues? I have been going through hundreds of videos and books. No one explains that. It just doesn't make sense to me to use the covariance matrix. Covariance is a very useless parameter. It doesn't tell you much at all.
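
One answer to the question uses standard notation that is assumed here. A data matrix with $n$ observations and $d$ variables is $n \times d$ and usually not square, so it has no eigenvectors of its own. Its singular value decomposition does exist, and once the columns are centered it yields the same principal directions as the covariance matrix. Let $X$ be the centered data matrix with $X = U \Sigma V^\top$, where $U^\top U = I$:

$$
C = \frac{1}{n-1} X^\top X = \frac{1}{n-1} V \Sigma^\top U^\top U \Sigma V^\top = V \, \frac{\Sigma^\top \Sigma}{n-1} \, V^\top
$$

The right singular vectors (the columns of $V$) are therefore the eigenvectors of $C$, with eigenvalues $\lambda_i = \sigma_i^2 / (n-1)$. Running SVD on the data directly and diagonalizing the covariance matrix give the same components. The covariance form just makes explicit that PCA ranks directions by variance.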

Believe it or not, I've been wondering a lot about the concept of covariance because every video seems to miss the reason behind the idea. But I think I kind of figured it out today before watching this video, and I drew the exact same thing that is in the thumbnail. So I guess I was thinking correctly :))

thanks for this simple yet very clear explanation

babe, var(x,x) makes no sense. Either you say var(x) or cov(x,x).
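
The identity behind this comment is that variance is the covariance of a variable with itself. This is also why the diagonal entries of a covariance matrix are the individual variances.

$$
\operatorname{Cov}(X, Y) = \mathbb{E}\big[(X - \mu_X)(Y - \mu_Y)\big],
\qquad
\operatorname{Var}(X) = \operatorname{Cov}(X, X) = \mathbb{E}\big[(X - \mu_X)^2\big]
$$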

Best PCA Visual Explanation! Thank You!!!

Plz do more videos

Great clarity. You clearly understand your stuff from a deep level so it's easy to teach.

poggers explanation, thank you

Thank you, Ma'am!

Wow, that was quite a good explanation.

beautiful, thanks a lot!

Great explanation. Thank you.

Congratulations Emma, your work is excellent!

Hello Emma, Great job! Very nicely explained.

Great explanation!

Nice job, I was always kinda confused by this.

I just love the voice🙄😸

Thank you for this great lecture.

investigate hedge/hogs

This video needs a golden buzzer.

Great video, thank you!

Very nice explanation!
