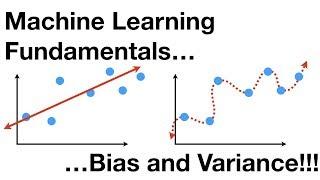

Machine Learning Fundamentals: Bias and Variance

Comments:

good good good good

Don't mind if this is my ring tone, drives the confusion away, BAM...

Wonderful clarity. Well done!

Does the bias apply to all data or only the test data? Same question for Variance.

khouya sir t7wa

A silly doubt, please try to clarify if possible. You said that after a certain weight, mice don't get any taller. That is not necessarily true in practice. Let's take the limit as 80 kg: some people grow taller even after 80 kg. So are you asking us to assume a dataset where this is possible?

good good great

I have a feeling that if you were Japanese you'd be a great haiku poet

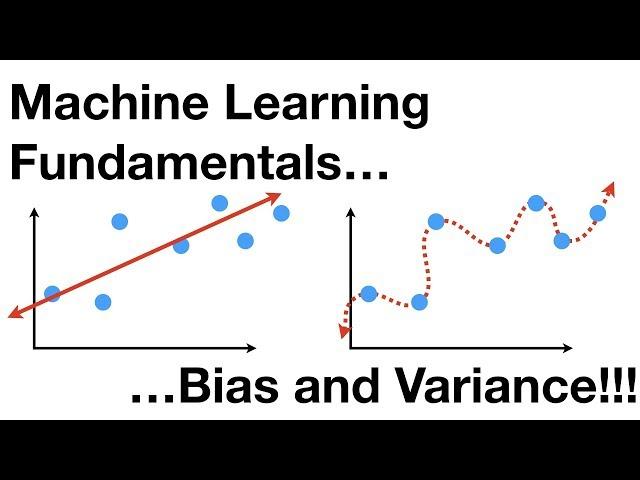

The inability of a machine learning method like linear regression to capture the true relationship is called bias. A large amount of bias means the model is largely unable to capture the trend in the data.

The difference in fits between data sets is called variance.

We can compare how well the straight line and the squiggly line fit the training set by calculating their sums of squares (square the distance between the fitted line and each data point, then add them up).
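The notes above can be sketched in a few lines of Python. This is only a rough illustration, not the video's own code: the mouse data is simulated, the helper names `make_mice` and `sum_of_squares` are made up for this example, and a degree-5 polynomial stands in for the squiggly line.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_mice(n):
    """Hypothetical mouse data: height rises with weight, then levels off."""
    weight = rng.uniform(0.0, 1.0, n)
    height = 1.0 - np.exp(-4.0 * weight) + rng.normal(0.0, 0.05, n)
    return weight, height

def sum_of_squares(coeffs, x, y):
    """Square the distance between the fitted curve and each point, then add them up."""
    residuals = y - np.polyval(coeffs, x)
    return float(np.sum(residuals ** 2))

x_train, y_train = make_mice(8)
x_test, y_test = make_mice(8)

# Fit both models on the training set only.
line = np.polyfit(x_train, y_train, deg=1)      # straight line
squiggle = np.polyfit(x_train, y_train, deg=5)  # stand-in for the squiggly line

train_line = sum_of_squares(line, x_train, y_train)
train_squiggle = sum_of_squares(squiggle, x_train, y_train)
test_line = sum_of_squares(line, x_test, y_test)
test_squiggle = sum_of_squares(squiggle, x_test, y_test)

print(f"straight line: train={train_line:.4f}  test={test_line:.4f}")
print(f"squiggly line: train={train_squiggle:.4f}  test={test_squiggle:.4f}")
```

On the training set the squiggly line always has the smaller sum of squares, because it can bend toward every point. How far apart its training and testing numbers end up is the variance the notes describe, and it will differ from one random dataset to the next.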

Wtf bro?! How on earth do you answer all these comments?

StatQuest so cool man

The way you explain it, priceless. Thank you so much.

man

I love how you explained it, so easy to understand, like butter 🔥🔥🔥🔥

Just cool

One way I remember this is:

Bias : It's the loss associated with training

Variance: It's the loss associated with testing

If your training loss value is low, it means you have low bias and vice versa.

If your testing loss value is low, it means you have low variance and vice versa.

The intros are amazing, and so are the videos, thanks!

I came here after taking a grad level course, but this simple explanation often stays longer in the mind :)

This learning is fun. I like the way you create videos.

I'm amazed how many bams I've reached just in a couple of hours. Your videos have been enlightening, thank YOU very much!

Double BAM !!

Very simply and amazingly explained, saw many tutorials but this was by far the best. Thank you :)

THANK YOU SO MUCH!

OMG, Pls join as a prof in my university hehe

Thanks!

- Bias is a number showing the ability to fit the training set: the smaller the bias, the better it fits the training set

- Variance is a number showing the ability to fit the testing set, ... THE SAME

- We want to find the sweet spot where bias is low and variance is low

- There are three methods: regularization, boosting, bagging
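Of the three methods in the list above, regularization is the easiest to sketch. What follows is a minimal ridge-regression example under loud assumptions: the data is simulated, `polynomial_features` and `ridge_fit` are names made up for this illustration, the penalty strength `lam=0.1` is arbitrary, and the intercept is penalised along with the other coefficients to keep the code short.

```python
import numpy as np

def polynomial_features(x, degree):
    """Columns 1, x, x^2, ..., x^degree."""
    return np.vander(x, degree + 1, increasing=True)

def ridge_fit(X, y, lam):
    """Minimise the sum of squares plus lam * (sum of squared coefficients).

    Simplification: the intercept is penalised too.
    """
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

# Hypothetical mouse data: height rises with weight, then levels off.
rng = np.random.default_rng(1)
weight = rng.uniform(0.0, 1.0, 8)
height = 1.0 - np.exp(-4.0 * weight) + rng.normal(0.0, 0.05, 8)

X = polynomial_features(weight, degree=5)
w_plain = ridge_fit(X, height, lam=0.0)  # no penalty: ordinary least squares
w_ridge = ridge_fit(X, height, lam=0.1)  # penalty shrinks the coefficients

ssr_plain = float(np.sum((height - X @ w_plain) ** 2))
ssr_ridge = float(np.sum((height - X @ w_ridge) ** 2))

print("coefficient size:", np.linalg.norm(w_plain), "->", np.linalg.norm(w_ridge))
print("training sum of squares:", ssr_plain, "->", ssr_ridge)
```

The penalty makes the fit a little worse on the training set (slightly more bias) in exchange for smaller, calmer coefficients, which is how regularization aims to lower variance. Whether the trade pays off on new data depends on the dataset and on the chosen `lam`.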

When you say high variance on different datasets, does that mean different test datasets?

I replayed this video - not because the explanations weren't clear. I just wanted to hear the song again haha

this is probably how education is gonna be in the future, thanks a lot!

thank you

So well explained!! Way better than my ML prof :D Thx, good examples, good vid

Thanks!

Is the intro song inspired by Smelly Cat by Phoebe Buffay? :P

One of the best videos I have come across so far

perfect

Hi, according to some textbooks, bias is given to the network. Is it given, or does it come out of the network?

Keep making videos like this… ❤

StatQuest using the iMessage color scheme to keep our attention.... MVP🏆

BAM!

GTAT - GREATEST TEACHER OF ALL TIME

I always love your intro music!

You just simplify everything...great work...love from India❤

Thanks!

This was great!

So for a model to overfit, does it have to be non-linear? I mean, can't a linear model suffer from the overfitting problem?
