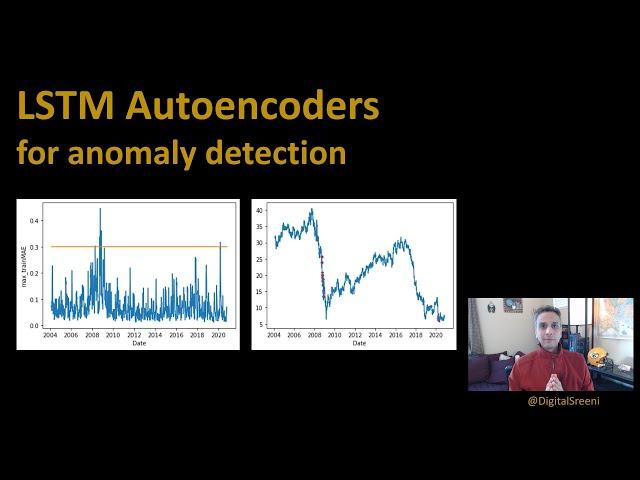

180 - LSTM Autoencoder for anomaly detection

Comments:

Thanks! But I don't understand why the model is trained to predict y, while the anomaly score is given based on MSE between y_pred and X. Shouldn't it be between y_pred and y?

I believe it should be model.fit(trainX, trainX) instead of model.fit(trainX, trainY)
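
The mismatch raised in these comments can be seen from the array shapes alone. Below is a minimal sketch, assuming a windowing helper similar to the one in the video; the name `to_sequences`, the window length of 30, and the toy sine series are assumptions for illustration. A next-value target has one value per window, while a reconstruction target is the window itself.

```python
import numpy as np

def to_sequences(values, seq_size=30):
    """Split a (n, features) array into overlapping windows and next-step targets."""
    x, y = [], []
    for i in range(len(values) - seq_size):
        x.append(values[i:i + seq_size])   # window of seq_size steps
        y.append(values[i + seq_size])     # the value right after the window
    return np.array(x), np.array(y)

series = np.sin(np.linspace(0, 20, 200)).reshape(-1, 1)  # toy univariate series
trainX, trainY = to_sequences(series, seq_size=30)

print(trainX.shape)  # (170, 30, 1)
print(trainY.shape)  # (170, 1)
```

A reconstruction autoencoder would be fitted as `model.fit(trainX, trainX)` and scored against `trainX`; a next-value model would be fitted as `model.fit(trainX, trainY)` and scored against `trainY`. Either pairing is consistent on its own; mixing them is what the comments are pointing out.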

Thank you for sharing!

What I can't understand here is the part where we create the anomaly_df.

We know that for each sequence of 30 observations, we have a single MAE.

So how can I detect which of these 30 observations is the anomaly within a sequence?
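
One possible way to localize the anomaly inside a window is to keep the error per time step instead of averaging it away. This is only a sketch, and it assumes the model reconstructs the whole window (output shape equal to the input shape); `X_pred` here is a stand-in for `model.predict(X)`, with an error injected by hand.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 30, 1))          # 5 windows, 30 steps, 1 feature
X_pred = X.copy()                         # stand-in for model.predict(X)
X_pred[2, 17, 0] += 4.0                   # inject a large error at step 17 of window 2

abs_err = np.abs(X_pred - X)              # shape (5, 30, 1)
window_mae = abs_err.mean(axis=(1, 2))    # one score per window, shape (5,)
step_err = abs_err.mean(axis=2)           # one score per time step, shape (5, 30)

worst_window = int(window_mae.argmax())
worst_step = int(step_err[worst_window].argmax())
print(worst_window, worst_step)           # 2 17
```

The per-window MAE flags which sequence is unusual; the per-step error within that sequence points at the observation that contributes most to it.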

That's a great video, sir. I have two things to say: first, it would be a great pleasure to have a video only on TimeDistributed, and second, a query.

Here we set return_sequences to False and then used a RepeatVector so that we can stack LSTM layers again.

But can't we just set return_sequences to True in the first layer, so that we can eliminate the RepeatVector layer?

Is using RepeatVector particular to autoencoders built with LSTM, or is it just something experimental tried for better accuracy? I mean, can we also try setting return_sequences to True and removing the RepeatVector layer?
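
To make the role of RepeatVector concrete, here is a NumPy sketch of what that layer does to shapes. It is not Keras code; the batch size of 4 and the 128 units are assumptions for illustration.

```python
import numpy as np

batch, timesteps, units = 4, 30, 128
encoded = np.random.rand(batch, units)    # encoder output with return_sequences=False

# What RepeatVector(timesteps) does: copy the single vector once per time step
repeated = np.repeat(encoded[:, np.newaxis, :], timesteps, axis=1)
print(repeated.shape)                     # (4, 30, 128)
```

With return_sequences set to True, the encoder would also emit an array of shape (batch, 30, 128), so the layers would still stack. The difference is that each time step would carry its own hidden state, so the decoder would no longer be forced to work from a single summary vector of the whole window.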

Isn’t this trained on next value regression and not reconstruction? Seems like you just mix the architectures and do next value prediction and then evaluate based on the regression error

I have a multivariate dataset with 86 dimensions, instead of 1 like in the video.

How do I compute the MAE in this case?
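
For the multivariate case, one common option is to average the absolute error over both the time and feature axes. A minimal sketch, assuming the model reconstructs windows of shape (windows, 30, 86); `X_pred` is a noisy stand-in for `model.predict(X)`.

```python
import numpy as np

rng = np.random.default_rng(1)
n_windows, timesteps, n_features = 10, 30, 86
X = rng.normal(size=(n_windows, timesteps, n_features))
X_pred = X + rng.normal(scale=0.1, size=X.shape)   # stand-in for model.predict(X)

abs_err = np.abs(X_pred - X)
mae_per_window = abs_err.mean(axis=(1, 2))   # shape (10,): one score per window
mae_per_feature = abs_err.mean(axis=1)       # shape (10, 86): which feature deviates
print(mae_per_window.shape, mae_per_feature.shape)
```

The per-window score can be thresholded as in the univariate case, while the per-feature breakdown helps to see which of the 86 dimensions drives a flagged window.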

Firstly, thanks. My question: when the input is 30*1, meaning 30 values, how can the output be 128? In an autoencoder we compress the data and then decode it, for example 30 to 15 to 10, then decode.

Your content is awesome. It's really helping me understand more concepts about ML, because you don't just stay with the theory but move on to the practice (that's pure gold for me). Thanks for sharing all of this knowledge with us!

Hi Sreeni, it seems like you are feeding the neural network incorrectly. For an autoencoder, the input and output should be the same. I tried with this code and got better results:

es = EarlyStopping(monitor='val_loss',patience=5)

history = model.fit(trainx,trainx,epochs=100,batch_size=32,validation_split=.1,callbacks=[es])

Epoch 16/100

235/235 [==============================] - 10s 43ms/step - loss: 0.0287 - val_loss: 0.1243

Hi, thanks for your video. Please, is there a way I can pull out the encoder compressed data with the original number of rows for supervised learning? I have actually tried it and the size I got was just the sample size instead of the size of the original number of rows.

So inspiring! Well done. How do we get the code, please?

Well done! Thanks for this nice video! Greetings from Germany

The best video I have ever seen, great.

Thanks a lot for these videos. Just a question: should trainMAE not be calculated with trainY instead of trainX? I'm a bit confused.

Thank you for this valuable video!

Is it necessary to perform standardization (via StandardScaler methods) when there is only one feature?

If I wanted to use the LSTM autoencoder with an input dataset containing text rather than a temporal sequence, can it be done?

For example, with a dataset containing fake news.

I got an error at the end:

y = scaler.inverse_transform(test[timesteps:].Open),

Expected 2D array, got 1D array instead

I also tried to reshape but still got the same error.

Could you help me with this?
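
A likely cause of this error is that a single pandas column such as `test[timesteps:].Open` is one-dimensional, while scikit-learn scalers expect a 2-D array of shape (samples, features). The sketch below shows only the shape handling with NumPy; the sample values are made up, and the `scaler` call in the comment is hypothetical, assuming a scaler fitted on a single column.

```python
import numpy as np

values = np.array([0.57, 0.38, 0.19, 0.32])   # 1-D, shape (4,): this is what triggers the error
as_column = values.reshape(-1, 1)             # 2-D, shape (4, 1): one feature, many samples

# Hypothetical call, assuming `scaler` was fitted on a single column:
#   restored = scaler.inverse_transform(as_column)
# Flatten back to 1-D before handing the result to a plotting function:
flat = as_column.ravel()
print(as_column.shape, flat.shape)
```

With pandas, selecting the column with double brackets, for example `test[timesteps:][['Open']]`, returns a 2-D DataFrame rather than a 1-D Series, which may be enough on its own.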

How can we compare how different models performed when there are no labeled anomalies?

Can you explain how to do the same with supervised anomaly detection on a labeled multivariate dataset using LSTM?

How can we apply the same idea of anomaly detection to data that is not a time series, for example, clients in a hospital and their health tests?

Thank you. I could follow your story even though I am not a data scientist. You have unique skills of explaining something complex in simple words with good enough details.

Thank you for a very informative video.

I have one question (anyone can answer it)

What advantage does autoencoders give for anomaly detection over classical ML algorithms?

I have a doubt here: in autoencoders the output is also x, so why did you train the model with trainx and trainy instead of trainx and trainx?

Sir could you please provide a video on LSTM Variational Autoencoder for multivariate time series.

Wow this was fantastic! I didn't even know what an autoencoder was before watching

In all other autoencoder videos you've done .fit(x,x). Why are you doing .fit(x,y) here?

Thank you very much for the tutorial.

I have a problem with sns.lineplot (row 142). I always get the error below. How can I fix it?

ValueError: Expected 2D array, got 1D array instead:

array=[0.57032452 0.37515913 0.19478522 ... 0.32379982 1.23183246 0.9894165 ].

Reshape your data either using array.reshape(-1, 1) if your data has a single feature or array.reshape(1, -1) if it contains a single sample.

I have a problem with plotting anomalies (last task). How do I solve ValueError: Expected 2D array, got 1D array instead?

How can one feature become 128 features? I couldn't understand this part (Input - LSTM1). @DigitalSreeni
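
On the 128: in an LSTM layer this number is the size of the hidden state (the number of units), not a count of input features or time steps. A small sketch of the standard parameter-count arithmetic follows, assuming the first layer is an LSTM with 128 units on a 30x1 input as the question implies; the helper name `lstm_param_count` is made up for illustration.

```python
def lstm_param_count(input_dim, units):
    """Trainable weights in a standard LSTM layer (4 gates, each with bias)."""
    return 4 * (units * (input_dim + units) + units)

timesteps, input_dim, units = 30, 1, 128
print(lstm_param_count(input_dim, units))   # 66560
# Output of the layer: (batch, units) for the last step only,
# or (batch, timesteps, units) when every step's hidden state is returned.
```

The compression in this kind of model therefore comes from summarizing all 30 time steps into one hidden-state vector, and then typically into a smaller layer after it, rather than from shrinking 30 values to 15 to 10 as in a dense autoencoder.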

I am having trouble plotting testPredict and testX. I want to see the predicted curve.

Sir, I have a time series dataset in which time and vibration acceleration have been recorded. I have to classify tool faults from that dataset using an LSTM. How should I use it?

Can I use this method for clustering?

I have a question. If I am working on a multivariate problem where I have 7 features in my data and I am using, e.g., 6 features to predict 1 feature, how should I modify the code to output 1 feature, since my trainX.shape[2] contains 6 features instead of 1?

Thank you. Very clear explanation!!

Hi Sreeni, thanks for the great video. I am just curious: after you perform the StandardScaler transformation, how are train and test still pandas DataFrames? They would be converted to NumPy arrays once any transformation is applied.

Why does your script not work in my Colab environment? The train loss does not go below 0.3, which is much larger than in your video. For me, every value of "trainPredict" is near -0.5, whereas trainY is distributed between -1 and 4.

If the LSTM is reconstructing the same input sequence, why do you create an X and Y? Shouldn't the input and output be both the "X"?

If I understand correctly, autoencoders are not able to detect recurring patterns. If this anomalous drop were something recurring, is there a way to take this into account?

Thanks, sir. This video gave me the starting point I needed to work on time series anomaly detection.

When I see DigitalSreeni I know I'm in good hands

Your explanation is simple but clear. Thanks for your effort.

Subs Added, thanks for the wonderful video.

Thank you for kindly sharing this.

Thanks, sir, for the videos. Do you have a tutorial on how we can use Plotly to show which event each anomaly corresponds to?

Thanks in advance

Extremely helpful. Thanks very much.

This is extremely helpful. Thank you very much.

The video is interesting. I have a doubt.

1. Given that the network is trained so that the input and output are the same, why are trainX and trainY given in the fit command?

Shouldn't it be trainX, trainX?

Can I use LSTM with video analysis to detect anomalies?

Thank you, it looks like GE got hit hard by the 2008-2009 economic crash and maybe by Covid-19 in 2020...
