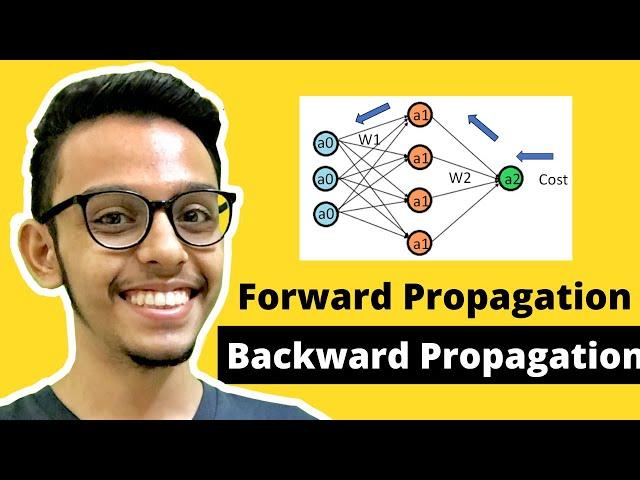

Forward Propagation and Backward Propagation | Neural Networks | How to train Neural Networks

Comments:

If you found this video helpful, then hit the like button👍, and don't forget to subscribe ▶ to my channel as I upload a new Machine Learning Tutorial every week.

let bro cook

Nicely explained. Keep up the good job!

wait you haven't explained backpropagation at all

Your videos are very helpful. It would be great if you sorted the videos. Thank you😇😇😇

great video

Fantastic explanation. Thank you

y no subtitles?

Literally best. Crisp and clear!! Thank you

Very helpful and to the point and correct!

Brother, your explanation was great, but there are some mistakes I have pointed out.

best explanation, best playlists

I don't usually interact with the algorithm much by giving likes and dropping comments, but you beat me into submission with this. Hopefully I understand the rest of it too lol.

You explain better than popular course instructors on deep learning

Best explanation I've seen so far

Are B1 and B2 initialized randomly too?

Absolutely loved the way you explain. So easy to understand. Thank you

Excellent explanation jazakallah bro

Please share the code for the backpropagation algorithm.

so glad I found this channel!!

Superb, sir. I learned a lot from this, including how to do the calculations. It's very useful for our studies. Thank you, sir.

Can A* actually be Z*, e.g. A1 = Z1?

I've always felt as if I was on the cusp of understanding neural nets but this video brought me past the hump and explained it perfectly! Thank you so much!

Sir, it's W¹¹[¹] * a⁰[1], right? You've written it as W¹¹[¹] * a¹[1] in the matrix multiplication. Can you verify whether I'm wrong?

what is this B1

Such a simple and neat explanation.

This was actually pretty straightforward

Small doubt: what is f(z1)? I am assuming these are just different types of activation functions, where the input is just the weight of the current layer * the input from the previous layers. Is that correct?

This video should be titled "Explain Forward and Backward Propagation to Me Like I'm Five". Thanks man, you saved me a lot of time.

Good job. But in gradient descent, W2 and W1 must be updated simultaneously.

Amazing work, keep it going :)

Thanks man. The slides were amazingly put together.

Good Explanation !!

You explained it in a very clear and easy way. Thank you, this is so helpful!

Isn't the equation Z = W.X + B = transpose(W)*X + B? Hence the weight matrix you have given is wrong, right?

Hi, can you enable the captions option?

Great video, and great explanation thanks dude!

I'm a bit confused by the exponent notation, since some of it does not correspond to the rest.

You are great. It will be very good if you continue.

Your videos on neural networks are really good. Can you please also upload videos on generalized neural networks? That would be really helpful. P.S. Keep up the good work!!!

Awesome, really helpful! Thank you

thank u sir it was really helpful

you are really awesome. love your teaching ability

hi, how to calculate the cost?

great video as always

Great video. Please also make a video on SVM as soon as possible.