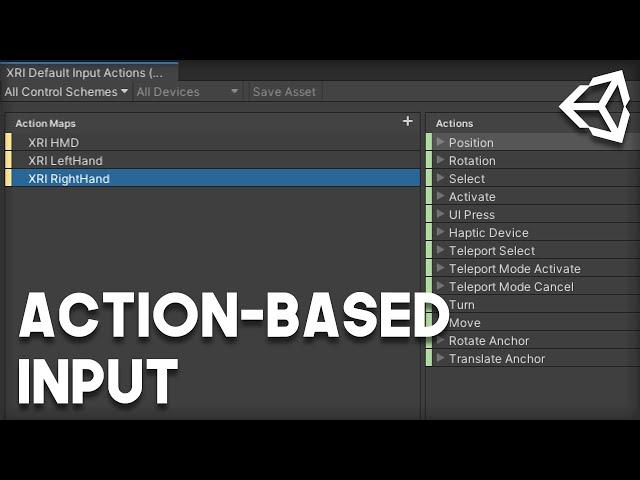

How to Setup XR Toolkit's Action-Based Input in Unity

Comments:

This is one of the most helpful Unity tutorials I have ever seen! Thank you so much!


But how do I bind a button to a custom function? I don't understand how to make the character sprint with the press of a button with this new system.


nice to find this info but it's already outdated... everything changed so much since the time you made that video. It's unusable now...


You're my hero, I was beating my head against the terrible default Unity instructions on this all week. Cheers man.


How do you assign new actions to controls?


great explanation of this system, even after two years.


Thank you Andrew! Your video has saved me a lot of time! :)


Pure gold! I was having so much trouble and I was missing adding the Input Action Manager script. Thanks so much!


Currently trying to get a button click working and it isn't. A video on that would be sweet.


Awesome! I would like to use movement with action-based XR. Any vids?


Great video. Thanks!


Oh, man, thank you! I was missing the Input Action Manager. Great channel, very useful for beginners like me.


Brilliant


thank you so much bro, for this detailing


Might be a stupid question, but when I click RMB in Hierarchy it shows only XR options without "(Action-based)", what could be wrong? Also when I click on Default Input Actions Presets - Inspector says "Unable to load this Preset, the type is not supported" =(


Based.


I updated the XR Interaction Toolkit to import the Default Input Actions. However, as soon as I imported them, an error occurred in the protected void OnSelectEnter(XRBaseInteractor) function in Interactables' AxisDragInteractable.cs. Is there a solution?


Thank you!


Best tutorial for beginners with the XR Toolkit. Very awesome!


Thank you for this video!!

This is stuff that should be in the official docs to begin with.

Right Click > XR > "Convert Main Camera to XR Rig" ... that is my only option. What am I doing wrong?


O M G! You actually go to the documentation and explain all the "whys"! This is so awesome!


How do I use this if I want a Debug.Log when I press A, B, X, or Y on my Quest 2? Do you have a script for that? I've been searching for this info for the past 4 days and can't move on until I know how.


Can you do hand-tracking with this? i.e. Quest 2


How do you detect button presses? Like the X and Y buttons on the Oculus Quest controllers, for example?


I was having issues making code run when the primaryButton on the right controller was pressed. After a really long time messing with this I got it working, and wanted to share in case someone else is having this issue. First, double-click your XRI Default Input Actions asset. Click XRI RightHand in the left column, then click the + symbol in the middle column to create your action. I named mine Test. Click it and set the Action Type in the right column to Button, then click the + symbol next to Test and click Add Binding. Click the new binding, then in the Path parameter in the right column navigate to the primaryButton for the XR controllers, and check the "Generic XR Controller" checkbox. Once the Test action is created in the input action window, you have to add it to the ActionBasedController script; this is what was throwing me off. Open that script and add the following, which will let you reference the Test action in other scripts:

    [SerializeField]
    InputActionProperty m_TestAction;

    public InputActionProperty testAction
    {
        get => m_TestAction;
        set => SetInputActionProperty(ref m_TestAction, value);
    }

Once you have done that, you can reference this action in code. The buttons are also weird with this system, by the way: the button press returns a float, not a bool like you would think. To make something happen when the button is pressed, use if (buttonPressed > 0) instead of if (buttonPressed == true). You could also create a function and have it run when the button is triggered. Here is the code I used:

    using UnityEngine;
    using UnityEngine.InputSystem;
    using UnityEngine.XR.Interaction.Toolkit;

    public class ActionInputTest : MonoBehaviour
    {
        // Float read from the action; greater than 0 while the button is pressed
        private float buttonPressed;
        private ActionBasedController controller;

        private void Start()
        {
            // This script must sit on the same GameObject as the ActionBasedController
            controller = GetComponent<ActionBasedController>();
        }

        private void Update()
        {
            // testAction is the property added to ActionBasedController above
            buttonPressed = controller.testAction.action.ReadValue<float>();
            if (buttonPressed > 0)
            {
                Debug.Log("Button Pressed");
            }
        }
    }
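A possible alternative to the polling approach, sketched here under assumptions and not taken from the video: rather than editing the toolkit's ActionBasedController script, a component of your own could hold an InputActionReference and subscribe to the action's performed callback. The class name TestActionListener and the field name testActionReference are made up for illustration. The sketch assumes the Test action is assigned to the field in the Inspector and that something (for example the Input Action Manager) has enabled the action asset, since a disabled action never fires callbacks.

```csharp
using UnityEngine;
using UnityEngine.InputSystem;

// Hypothetical component for illustration; not part of the XR Interaction Toolkit.
public class TestActionListener : MonoBehaviour
{
    // Assumption: the "Test" action from the XRI Default Input Actions asset
    // is dragged into this field in the Inspector.
    [SerializeField]
    private InputActionReference testActionReference;

    private void OnEnable()
    {
        if (testActionReference == null) return;
        // Subscribe so the handler runs when the action is performed.
        testActionReference.action.performed += OnTestPerformed;
    }

    private void OnDisable()
    {
        if (testActionReference == null) return;
        // Unsubscribe to avoid callbacks on a disabled component.
        testActionReference.action.performed -= OnTestPerformed;
    }

    private void OnTestPerformed(InputAction.CallbackContext context)
    {
        Debug.Log("Button Pressed");
    }
}
```

With this layout the package script stays untouched, so a toolkit update should not overwrite the change, and the handler runs from the callback instead of being checked every frame in Update.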

I have watched your videos on the action-based input system multiple times, but I am still confused about one thing. If you, for example, create an action called "test" and bind it to the primary button of the right-hand controller, how can you do something when that button is pressed? I'm trying to reference a variable storing the ActionBasedController component, called controller. I can, for example, do bool buttonPressed = controller.selectAction.action.ReadValue<bool>(); to see if the select action is being pressed, but when I replace select with test (test being the action I created in the action window), it won't work. Is there something else I have to do to check if an action I added is being pressed? Thx.


Nice Tut.

Is there a way to control the XR Rig via Android Smartphone?

I tried to use:

device rotation[HandheldARInputDevice]

device rotation[OpenVR Headset]

attitude[Sensor]

But via Unity Remote 5 nothing happens.

Do I have to set up my device differently?

THANK YOU ANDREW YOU LOOK AFTER US HOMIE, DIVINES BLESS YOU MY MAN


Extremely helpful tutorial. Combines a lot of info in a short time. Great work!


Sweet! This is supported natively now! Five months before this video came out, I had modified XR's Controller script to have public-facing functions so I could pass through Select and Activate actions via script. In a hackish way I could still use Unity's Input System for all the controls and still pass them through to VR. That allowed me to have keyboard, mouse, gamepad, and Oculus controller simultaneously trigger things like teleportation. After seeing this video, I can now revisit this and do it properly without modified library code, as this type of functionality is now natively supported between the Input System and the XR toolkit! Thanks!


JEEZ! THANKS


How can I read the button values from a script?


Thanks for showing us the way Man. Such great content. Really appreciated.


So there's a rotation "action", but how do you actually get the rotation out of it? The only parameter is a callback:

controller.rotationAction.action.performed += (UnityEngine.InputSystem.InputAction.CallbackContext obj) => { };

Do you change the Main Camera's position on its transform or the Camera Offset's transform? Thx


Is there a tldr on how to setup a new action? I'm trying to subscribe to a primary button action, set it up in the action menu and mapped it to generic XR left hand. How can I access this in code to subscribe to the event, though?


Thanks, it helps me a lot.


Thank you so much! I'm just getting started with Unity and VR dev, and it's so frustrating that the Unity interface has changed so much in one year, and it's hard to set the inputs right with all the different versions people explain in tutorials! So thankful to find you explaining it properly for the setup in 2021.


Thank you for the video! It helped a lot. Just wondering if there is an easy way to also add primary and secondary buttons because I noticed they were not added by default


I'm running into a problem with the action-based XR rig. I'm on an Oculus Quest 2 in Unity 2020.2. When I build and run, the scene is locked in one position. Moving my head does nothing. My controllers are also locked onto the headset's position. As a check, I ran Unity's example VR scene and had no problems on my headset. I've tried everything I can think of: building in a fresh version of Unity, building in the URP instead of 3D, and double-checking the project settings. I also deselected everything in the Unity example scene and rebuilt the room-scale action-based XR rig in there, and it worked. I must be missing a setting somewhere, but I don't know what it is. Does anyone have any idea what might be causing this, and how to fix it?


This is great, thank you!

Now I am having issues using the XR Device Simulator when trying to control the virtual HMD. I am trying to apply the same principles but I can't seem to get it.

now this is what we call progress


Thank you very much for this, very helpful.


Awesome! Can't wait for an update to the interaction system with this new method, especially the new hand interaction / posing stuff. Also really happy to finally be able to leave the legacy vr system for cross platform setup.


How can I read the trigger value with the new input system? Meaning, I want to poll in Update for the current amount the trigger is pressed, giving values between 0 and 1. The Activate action in the default set seems to only be a button.


Thanks for the info. I had been struggling for hours before watching your video. Now I could finally make the controllers work! However, I have a problem with the camera: it doesn't seem to be tracking, not even the rotation. I have the project set up with the new input system and followed your instructions. The headset is an Oculus Quest 2. Any suggestions? Thanks!!


Thank you so much man!
