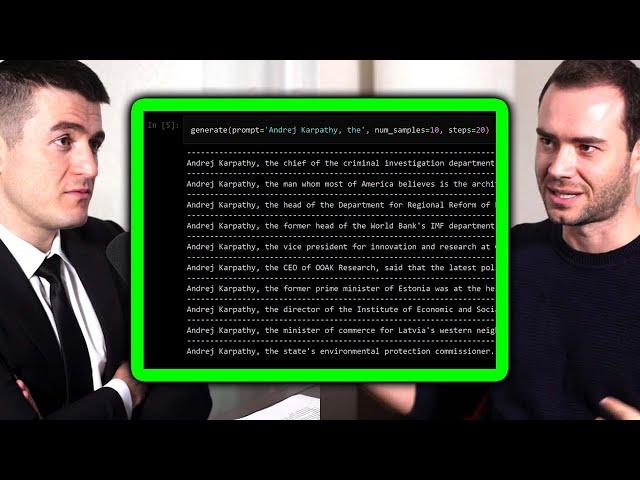

Transformers: The best idea in AI | Andrej Karpathy and Lex Fridman

Comments:

Great interview. Engaging and dynamic. Thank you.


The name "attention" was already around in earlier architectures. It was common to see bidirectional recurrent neural networks with "attention" on the encoder side. That's where the title "Attention Is All You Need" comes from: the paper basically removes the need for a recurrent or sequential architecture.
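
To illustrate the point about dropping recurrence, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: the learned query/key/value projections, multiple heads, and positional encodings of a real Transformer are omitted, and the names `softmax`, `self_attention`, and `tokens` are made up for this example. The thing to notice is that each output position is computed directly from all input positions, with no hidden state carried from one step to the next.

```python
import math


def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence.

    Assumption: queries, keys and values are given directly as lists of
    vectors; a real Transformer would first apply learned projections.
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Toy sequence of three 2-dimensional token vectors (illustrative values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens, tokens, tokens)
```

Because no position depends on the result for another position, the loop over `queries` could run in any order or in parallel, which is not the case for a recurrent network.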


Dr. Ashish Vaswani is a pioneer and nobody is talking about him. He is a scientist from Google Brain and the first author of the paper that introduced Transformers, which are the backbone of all other recent models.


The model YOLO (you only look once) is also an example of a "meme title"


Andrej's influence on the development of the field is so underrated. He's not only actively contributing academically (i.e. through research and co-founding OpenAI), but he also communicates ideas so well to the public (for free, by the way) that he not only helps others contribute academically to the field, but also encourages many people to get into it, simply because he manages to take an overwhelmingly complex topic (at least for me it used to be) such as the Transformer and strip it down to something that can be (more easily) digested. Or maybe that's just me - my professor in undergrad came nowhere near an explanation of Transformers as good and intuitive as Andrej's videos (don't get me wrong, [most] professors know their stuff very well, but Andrej is just on a whole other level).


So the last 5 years in AI are "just" dataset size changes while keeping the original Transformer architecture (almost)?


Incredibly interesting and thought provoking. But still disappointed this wasn't about Optimus Prime


Damn. That last sentence. Transformers are so resilient that they haven't been touched in the past FIVE YEARS of AI! I don't think that idea can ever be overstated given how fast this thing is accelerating...


Self-attention. Transforming. It's all about giving the AI more parameters to optimize the important internal representations of the interconnections within the data itself. We've supplied first-order interconnections. What about second order? Third... or is that expected to be covered by the sliding window technique itself? It would seem that the more early representations we can add, the more closely we can couple to the complexity and nuance of "the data". At the other end, the more we couple to the output, the closer to alignment we can get. But input and output are fuzzy concepts in a sliding window technique. There is no temporal component to the information. The information is represented by large "thinking spaces" of word connections. It's somewhere between a CNN-like technique that parses certain subsections of the whole thing at once and a fully connected space between all the inputs. That said, sliding is convenient, as it removes the hard limit on what can be generated and gives us an easy-to-understand parameter we can increase at fairly small cost to increase our ability to generate long-form representations exhibiting deeper nuance and accuracy. The ability to just change the size of the window and have the network adjust seems a fairly nice way to flexibly scale the models, though there is a "cost" to moving around, i.e. network stability, meaning you can only scale up or down so much at a time if you want to retain most of the knowledge gained from previous training.

Anyway, the key ingredient is that we purposefully encode the spatial information (into the words themselves) to the depth we desire. Or at least that's a possible extension. The next question, of course, is in which areas of representation we can supply more data that easily encodes, within the mathematics, information we think is important to represent and that isn't already covered by the processes of the system itself. Having the same thing represented in multiple ways (i.e. the data plus the system) is a path to overly complicated systems in terms of growth and addendums. The easiest path is to just represent it in the data itself, and patch it. But you can do stages of processing/filtering along multiple fronts and incorporate them into a larger model more easily, as long as the encodings are compatible (which I imagine will most greatly affect the growth of these systems, and their swappability through standardization).

Ideally this is information that is further self-represented within the data itself. FFTs are a great approximation we can use to bridge continuous and discrete knowledge. Though calculating them on word encodings feels like a poor fit, we could break the "data signal" into an individually chosen subset of wavelengths. Note this doesn't help with the next-word prediction "component" of the data representation, but it is an encoding based on past knowledge that can be used in unison with the spatial/self-attention and parser encodings to represent the info. (I'm actually not sure of the balance between spatial and self-attention, except that the importance of each token in the generation of each word relative to the previous word, along with possibly a higher order of interconnections between the tokens, is contained within the input stream.) If it is higher order, then FFTs may already be represented and I've talked myself in a circle.

I wonder what results dropouts tied to categorization would yield for the swappability of different components between systems? Or the ability to turn various bits and bobs on and off in a way tied to the data? I think that's how one can understand the reverse flow of partial derivatives of the loss function as well, by turning off all but one path at a time to split up the parts considered, but that depends on the loss function being used. I imagine categorizing subsections of data to then split off into distinct areas would allow finer control over the representations of subsystems, to increase scores on specific tests without affecting other testing areas as much. That could be antithetical to AGI-style understanding, but it would allow for field-specific interpretation of information, in a sense.

Heck. What if we encoded each word as their dictionary definitions?

What is meant by "making the evaluation much bigger"?

I do not understand "evaluation" in this context.

Attention is all you need?

No,

🎼🎶All You Need Is Love 🎶

Any top AI expert that is not a member of the tribe?


When he says the transformer is a general-purpose differentiable computer, does he mean in the sense that it is Turing complete?


Transformers is not so good a movie


well, this clip was great until it ended abruptly in mid-sentence. PLEASE! I signed up for clips, but I am frustrated to have missed a punchline here from Karpathy explaining how GPT architecture has been held back for 5 years. I have SO little time to now scan the full version! But at least you linked it. Your sponsor has left me sleepless.


"Don't touch the transformer"... good advice regardless of what kind of transformer you're talking about.


I don't think so 😕


Let me introduce myself: my name is Ariful Islam Leeton. I'm a software developer and an OpenAI developer.


The way this guy thinks and speaks reminds me of Vitalik Buterin. What do they have in common? High intelligence is not the only factor here.


So instead of pure intuition -- convolutional neural nets -- we have moved to something more like consciousness.


If I understand correctly, transformers = good. N'est-ce pas?


What?


Andrej speaks at my speed. Lex is more concise but packs knowledge into each word. So speaking them slower lets the listener build a sort of neural net of relations in their brain, all done automatically, which is only limited by knowledge and experience.


Lex's low IQ is insufferable, but he has the skill of getting good guests at least


Lex Fridman, you seem bored and uninterested. Holding your head up with your hand. You have Andrej in front of you, be professional. ;-)


I like your funny words magic man


Any guess why Vaswani is ignored?


We as a field are stuck in this local maximum called Transformers (for now!)


Transformers truly are more than meets the eye …


What absolute rubbish!!

Transformers are either Autobots or Decepticons. I don’t know what he was talking about but get a clue dude!

I loved them as well but after the first 2 films it really got boring


The paper is called "Attention Is All You Need", and IMHO attention is what made transformers so successful, not its application in the transformer architecture.


...I am disappointed it's not about Optimus Prime


Long time no see badmephisto!


Question:

without the optimization algorithms designing the hardware manufacturing is there reason to believe that the fundamental nature of these mechanisms reflect the inherent medium of computation? Nope. I guess not.

They're watching it.

It’s self attention ⚠️


and the greatest thing about it is that you do not need it


Saving that one to see if I can understand wth Andrej is talking about in a year.


Attention is truly all you need.


I had to check a few times if my video speed was not set to 1.5x


Recurrence all you need.


This makes me slightly freaked out that we don’t really understand what we’re developing….


Does anyone have a simple definition for “general differentiable computer”?


Is this guy a robot...

