The complete guide to Transformer neural Networks!

Comments:

The link to the image and its raw file are in the description. If you think I deserve it, please give this video a like and subscribe for more! If you think it’s worth sharing, please do so as well. I would love to grow to 100k subscribers this year with your help :) Thank you!

this was a brilliant video!! super comprehensive

Bro, all of my confusion vanished like a vanishing gradient.

Thanks. Really worth it.

Thank you so much for taking the time to code and explain the transformer model in such detail. I followed your series from zero to hero. You are amazing, and if possible, please do a series on how transformers can be used for time series anomaly detection and forecasting. Someone really needs to cover this on YouTube!

You explain really well! I think it's quite complex, but the way you explained it made it much clearer. Together with the coding video, it is extremely useful.

Can this be done in pure C++?

Life saver, thank you

Very well explained. Thank you.

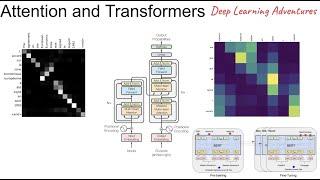

I like your visualization of the matrices. Those residual connections and positional embeddings were good details to mention here.

The background music is quite distracting, especially that popping sound; otherwise the content delivery is excellent.

The way you approach this topic makes it so easy to understand, and I appreciate the pace of your talking. Best content on transformers.

Very good. In general, articles don't show the dimensions when explaining. It helps a lot. Thanks.

very well 👍

So you're from the Silicon Valley of India. We all know it.

What is the use of the feed-forward network in a transformer? Please answer.

A video like this explaining the LLaMA model would be nice.

Great! Still a bit too hard for me, but I still learned stuff.

Question: would it be possible to use the same encoder across multiple languages? Without retraining it after the first time, I mean.

My friend Ajay, your playlist "Transformers from scratch" is great. It was very appealing to me to see your block diagram representation. Waiting with great anticipation for the final video. Would you be able to make it available soon?

Damn, could've used this a few weeks ago for my OMSCS quiz. Solid review though, nice job!

Will have to brush up my basics and then come back to this.

Do a video on this new model called RWKV-LM.

Lovely brother. I am your Neighbour Tamizhan. Lovely brotherhood

hopefully the series is completed soon ❤️ would binge watch 😁

Eagerly waiting for the upcoming videos in the series.

Amazing explanations throughout the series, and top-notch content, as always. Waiting for a detailed explanation/visualisation of the backward pass in the encoder/decoder during training. I would appreciate it if you were thinking in the same way.

amaaazing

Great channel and very useful video, thank you very much! I will watch other videos of your channel as well.

I have a question. After you perform layer normalization obtaining an output tensor, how do you give a three-dimensional tensor as input to a feed forward layer?

Do you flatten the input?
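On the flattening question: in the usual Transformer formulation no flattening is needed, because the feed-forward block is position-wise. The same two linear layers are applied independently to the last (feature) axis of every token, so a tensor of shape (batch, sequence, d_model) goes in and comes out with the same shape. Below is a minimal NumPy sketch of that idea. The function name `feed_forward`, the tiny sizes, and the random weights are illustrative assumptions, not the video's code; the original Transformer paper uses d_model = 512 with a single hidden layer of size 2048 and a ReLU in between.

```python
import numpy as np

def feed_forward(x, w1, b1, w2, b2):
    """Position-wise feed-forward block: Linear -> ReLU -> Linear.

    The matrix products act on the last axis only, so any leading
    axes (batch, sequence position) are carried through unchanged.
    """
    hidden = np.maximum(0.0, x @ w1 + b1)  # expand d_model -> d_ff, then ReLU
    return hidden @ w2 + b2                # project d_ff -> d_model

# Illustrative (assumed) sizes, much smaller than a real model.
batch, seq_len, d_model, d_ff = 2, 5, 8, 32

rng = np.random.default_rng(0)
x = rng.normal(size=(batch, seq_len, d_model))  # e.g. the layer-norm output
w1 = rng.normal(size=(d_model, d_ff)) * 0.1
b1 = np.zeros(d_ff)
w2 = rng.normal(size=(d_ff, d_model)) * 0.1
b2 = np.zeros(d_model)

out = feed_forward(x, w1, b1, w2, b2)
print(out.shape)  # same shape as the input: (batch, seq_len, d_model)

# Running the block on one token's vector gives the same result as the
# corresponding slice of the batched output.
single = feed_forward(x[0, 0], w1, b1, w2, b2)
print(np.allclose(single, out[0, 0]))
```

Deep learning frameworks behave the same way: a linear layer given a 3-D input transforms only the last dimension, which is why the three-dimensional tensor can be passed in directly.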

Masked multi-head attention is for the decoder, right? Is that a typo in your encoder architecture?

Could you please apply the transformer you have built to text summarisation? It would be really helpful.

concise

Really well presented.

Without a BCI, is the multi-head attention process possible with the human brain?

If I can recommend next steps for this series, going into BERT, GPT, and DETR would be lovely extensions.

Very well explained 👍

Your explanation is the most realistic explanation of the Transformer that I've ever seen on the internet.

Thanks dude.

What's in the feed-forward layers? Just an input and output layer? Are there hidden layers? What are the sizes of the layers?

Thanks!

Great overview! Thanks for taking the time to put all this together!

Amazing ❤ Salute to the dedication that went into making this video, the visual explanation, and the knowledge.

Video quality is amazing.

Keep it up, buddy!

Amazing, fluent in English; you speak like a native speaker.

Amazing <3

First :)

THIS IS AMAZING, helped me a lot, thanks :)
