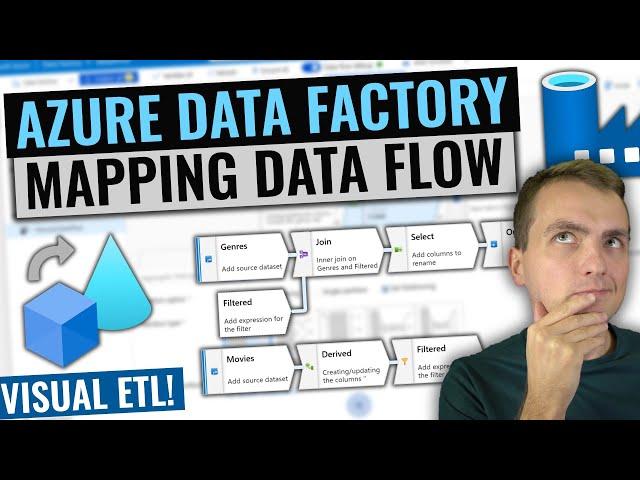

Azure Data Factory Mapping Data Flows Tutorial | Build ETL visual way!

In this episode I give you an introduction to what Mapping Data Flow for Data Factory is and how it can solve your day-to-day ETL challenges. In a short demo I will consume data from blob storage, transform movie data, aggregate it, and save multiple outputs back to blob storage.

Sample code and data: https://github.com/MarczakIO/azure4everyone-samples/tree/master/azure-data-factory-mapping-data-flows

Next steps for you after watching the video

1. Azure Data Factory introduction video

- https://youtu.be/EpDkxTHAhOs

2. Check mapping data flow documentation

- https://docs.microsoft.com/en-us/azure/data-factory/concepts-data-flow-overview?WT.mc_id=AZ-MVP-5003556

3. Helpful tips and samples

- https://github.com/kromerm/adfdataflowdocs/blob/master/data-flow-expression-samples.md

### Want to connect?

- Blog https://marczak.io/

- Twitter https://twitter.com/MarczakIO

- Facebook https://www.facebook.com/MarczakIO

- LinkedIn https://www.linkedin.com/in/adam-marczak/

- Site https://azure4everyone.com

Tags:

#Azure #Data_Factory #Data_Flow #Azure_4_Everyone #Adam_Marczak #Mapping_Data_Flow #Spark #ADF #big_data #SSIS

Comments:

Can you use PySpark or SQL in expression functions?

are only scale

I love you, Adam!

I have been struggling with using expression builder in Data Flow. I can't seem to figure out how to write the code. This video just made it look less complex. I'll be devoting more time to it.

Great! You are the best Adam.

Perfect

Thanks!

I owe you my paycheck tbh 😅🤣

best video on azure I have ever seen❤❤

Will it work with a pipe-separated (“|”) file instead of CSV?

Hello Adam, I followed these steps but I have a problem: I can't find the source columns when I go to the derived column component to write an expression based on an existing column. In your video the total columns in the source component shows 3; for me it shows 0. I changed the source from CSV to a SQL table and still didn't find a solution.

Great video! Most videos seem to focus mostly on the advertisement material straight from Azure. At best they show you the very dumb step of copying data from a file to a DB.

This is the first video I saw where you actually show how you can do something useful with the data, close to a real-life scenario.

Thank you.

Good explanation there.

HELLO I'M FROM RRRRRRUSSIA

Thank you Adam.

very nice

Very good explanation of Data Flow. Thanks, Mr. Adam.

👍 It's amazing, a practical implementation of Data Flow.

Thanks for such a good video.

It must be very challenging for you to do all of this in English, I imagine, Adam! Congratulations on pushing through despite the difficulty. 🙂

Amazing video, we want more parts!

The video is excellent. What is the problem statement that Data Flow is solving?

Nice video.

Just curious: can you explain toInteger(trim(right(title,6),'()')) in detail, please? Like, how does this expression execute?

Very well explained and demonstrated. Really helpful to get started with Data flows.
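
For readers with the same question about toInteger(trim(right(title,6),'()')), the expression can be read from the inside out: right(title,6) takes the last six characters of the title, trim(...,'()') removes parentheses from both ends, and toInteger(...) converts what remains to a number. Below is a rough Python emulation of those three steps, not the Data Flow engine itself. The function name extract_year and the sample title are hypothetical, and returning None for non-numeric text is an assumption standing in for however Data Flow handles a failed conversion.

```python
from typing import Optional


def extract_year(title: str) -> Optional[int]:
    """Rough Python emulation of toInteger(trim(right(title,6),'()'))."""
    last_six = title[-6:]          # right(title, 6): the last six characters
    digits = last_six.strip("()")  # trim(..., '()'): drop '(' and ')' at both ends
    try:
        return int(digits)         # toInteger(...): convert the text to a number
    except ValueError:
        # Assumption: signal "no usable year" with None when the text is not numeric.
        return None


# Hypothetical sample title in the "Name (yyyy)" shape used by the movie data.
print(extract_year("Toy Story (1995)"))  # 1995
```

The approach relies on every title ending in a four-digit year wrapped in parentheses; titles with a different ending will give a wrong or empty result.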

Thank you, Adam. As always, you rock.

Great tutorial

So helpful! Thank you very much Adam!

I really like your tutorials. I have been looking for a "table partition switching" tutorial but haven't found any good ones. Maybe you could do one for us? I am sure it'll be very popular as there aren't any good ones out there and it is an important topic in certifications :-)

Great video.

Question: Under "New Datasets", is there a capability to drop data into Snowflake? I see S3, Redshift, etc.

I appreciate the video and feedback!

I get an error message, e.g. handshake_failure, when the data flow source retrieves data from an API. Can anyone help? Thanks.

Excellent tutorials

You have such a talent for explaining data flow in a simple way. Thank you so much, Mr. Adam.

Adam, great video. I'm new to Data Flow and I have one doubt: I want to implement file-level checks in Data Flow but am not able to do it. All tasks perform data-level checks, like Exists or Conditional Split. Is it possible to implement a file-level check, such as whether a file exists in the storage account?

Great

Your videos are really great and helped me understand a lot of Azure concepts. Can you please make one using an SSIS package and show how to use it within Azure Data Factory?

Hello Adam, thanks a bunch for this excellent video. The tutorial was very thorough and anyone new can easily follow it. I do have a question though. I am trying to replicate a SQL query in Data Flow; however, I have had no luck so far.

The query is as follows:

Select ZipCode, State

From table

Where State in ('AZ', 'AL', 'AK', 'AR', 'CO', 'CA', 'CT'...... LIST OF 50 STATES);

I tried using Filter, Conditional Split and Exists transforms, but could not achieve the desired result. Being new to the Cloud Platform, I am having a bit of trouble.

Might I request that you please cover topics like data subsetting/filtering (WHERE and IN clauses, etc.) in your tutorials?

Appreciate your time and help in putting together these practical implementations.

Nice one Adam. Cool one. Keep doing fabulous videos always fella.

Many thanks.

Your channel is totally underrated, man

How do you delete from the target based on data from the source? I'm really struggling to understand how to do this when I have a column with a value that I want to delete in the target table. Everything seems to be geared toward altering the source data coming in.

best tutorial ever... 💪🏻💪🏻💪🏻

Do you plan to make a video introducing each transformation component? Thanks.

Have any of these options changed now? I am not able to see any data debug option to enable, and I can preview data directly in the dataset itself.

-1979 and ,12

This is why complex logic is needed. Nice tutorial :)

Hi Adam, thanks for making these videos, very clear and concise. I have a question (sorry, not related to this video) regarding Conditional Split: can the output stream activities run in parallel?

Great video! Thanks Adam!

I need to join a header with data. The header is dynamic. How can I retain the order of the merge?

Wouldn't it be simpler to do all of this using code?

very good explanation Adam. keep it up.

Can you show how to add the aggregation column to the same output?

Adam, excellent presentation of ADF concepts. I find all your videos really helpful in understanding ADF. One question regarding the sink dataset in a data flow: how can I create a dynamic folder in my blob storage based on the year, month and day when the data flow was triggered?

excellent explanation with simple scenario. Thank you.
