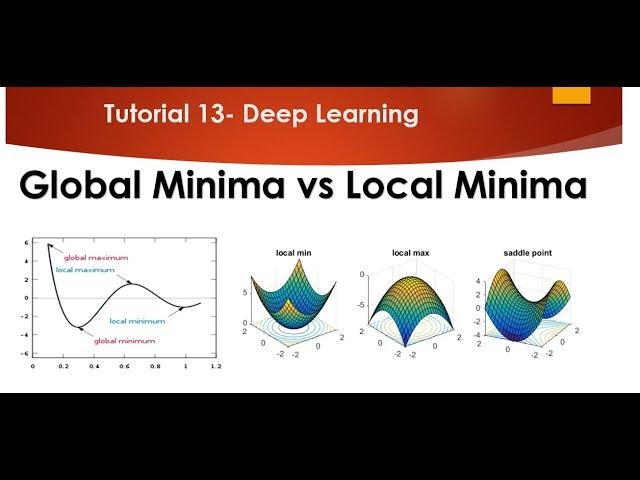

Tutorial 13- Global Minima and Local Minima in Depth Understanding

Comments:

Stopped this video halfway through to say thank you! Your grasp on the topic is outstanding and your way of demonstration is impeccable. Now resuming the video!

What if the function keeps decreasing from 8 to infinity? Would the lowest visible point still be my global minimum?
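
A minimal sketch of the situation in this question. It assumes that "decreasing from 8 to infinity" means the loss keeps falling for every w > 8, and it uses a toy loss L(w) = e^(-w) chosen only for illustration. Such a loss has no global minimum: its lower bound 0 is never attained, so the lowest point visible on any finite plot is not a minimum. Plain gradient descent keeps pushing w to the right and never reaches a point where the derivative is exactly zero.

```python
import math


def loss(w: float) -> float:
    # Toy loss (assumption): strictly decreasing and bounded below by 0, but never reaching 0
    return math.exp(-w)


def grad(w: float) -> float:
    # dL/dw = -e^(-w): always negative, so there is no point where it is exactly zero
    return -math.exp(-w)


def gradient_descent(w: float, lr: float, steps: int) -> float:
    for _ in range(steps):
        w = w - lr * grad(w)  # w_new = w_old - lr * dL/dw
    return w


w_100 = gradient_descent(8.0, lr=1.0, steps=100)
w_1000 = gradient_descent(8.0, lr=1.0, steps=1000)

# w keeps growing and the loss keeps shrinking, but training never settles at a minimum
print(w_100, loss(w_100))
print(w_1000, loss(w_1000))
```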

Amazing. Simple, short & crisp.

Nice explanation, sir.

I like this video; you explained this very well! Thank you!

You are always awesome! Thanks Krish Naik

At the global minimum, if the derivative of the loss function with respect to w becomes 0, then w_old = w_new and the value no longer changes. How, then, can the value of the loss function be reduced?
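
A minimal sketch of the idea behind this question, assuming a toy convex loss L(w) = (w - 3)^2, a starting weight of 0 and a learning rate of 0.1 (all chosen only for illustration). The loss is reduced during the updates made *before* the minimum is reached; once the derivative is (numerically) zero, w_new = w_old simply means training has converged and there is nothing left to reduce.

```python
def loss(w: float) -> float:
    # Toy convex loss (assumption): its minimum L = 0 is at w = 3
    return (w - 3.0) ** 2


def grad(w: float) -> float:
    # dL/dw = 2(w - 3): zero only at the minimum
    return 2.0 * (w - 3.0)


def train(w: float, lr: float = 0.1, steps: int = 200) -> tuple[float, list[float]]:
    """Run plain gradient descent and record the loss after every update."""
    history = [loss(w)]
    for _ in range(steps):
        w = w - lr * grad(w)  # w_new = w_old - lr * dL/dw
        history.append(loss(w))
    return w, history


w, history = train(0.0)
print(history[0], history[-1])  # the loss falls from 9.0 towards 0 during training
print(w, grad(w))  # w ends near 3, where the gradient is ~0, so further updates barely move it
```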

Hello Krish,

Thanks for the amazing work you are doing.

Quick one: you talked about the derivative being zero when updating the weights... so how do you tell it's a global minimum and not the vanishing gradient problem?

Hi Krish, when the slope is zero at a local maximum, why don't we consider local/global maxima instead of minima?

Why do we need to minimize the cost function in machine learning? What's the purpose of this? Yes, I understand that there will be fewer errors, etc., but I need to understand it from a fundamental perspective. Why don't we use the global maximum, for example?

Very impressive explanation. Now I have totally adapted to Indian English. So wonderful!

Awesome!

Thank you, Krish sir. Good explanation.

You are awesome... one of the gems in this field, making others' lives simpler.

This is sooo easily understandable, sir. I'm sooo lucky to have found you here. Thanks a ton for these valuable lessons, sir. Keep shining!

Hi Krish. Thanks a lot for your videos. You made me fall in love with DL ❤️ I took many introductory courses on Coursera and Udemy, and I couldn't understand all the concepts from them. Your videos are just amazing. One request: could you please make some practical implementations of the concepts so that they are easier for us to apply to practical problems?

Thanks Krish

Hi Krish, very informative as always. Thank you so much. Can you please also do a tutorial on the Fokker-Planck equation? Thanks a lot in advance...

I am self-studying machine learning. Your videos are really amazing for getting the full overview quickly, and even a layman can understand them.

If at the global minimum w_new is equal to w_old, what is the point of reaching there?? Am I missing something?? @krish naik

I don't think the derivative of the loss function should be used for calculating the new weights, because when it equals zero it makes the neural network's weights W(new) = W(old). That would be related to the vanishing gradient problem. Isn't it rather that the derivative of the loss function with respect to the neural network's output is used, where y actual and y hat become approximately equal and the weights are optimised iteratively? Please correct me if I'm wrong.

Krish bro, when w new and w old are equal, that would be the vanishing gradient descent problem, right??
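
The vanishing gradient problem is a different effect from reaching a minimum. A minimal sketch, assuming a chain of sigmoid layers where every pre-activation is 0 and every weight is 1 (hypothetical values chosen only for illustration): by the chain rule, the gradient that reaches the first layer is a product of per-layer factors weight * sigmoid'(z), and sigmoid' is at most 0.25, so the product shrinks geometrically with depth regardless of whether the loss is at a minimum.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x: float) -> float:
    # sigma'(x) = sigma(x) * (1 - sigma(x)); its maximum value is 0.25, reached at x = 0
    s = sigmoid(x)
    return s * (1.0 - s)


def backprop_factor(depth: int, pre_activation: float = 0.0, weight: float = 1.0) -> float:
    # Chain rule through `depth` sigmoid layers: multiply weight * sigma'(z) once per layer
    factor = 1.0
    for _ in range(depth):
        factor *= weight * sigmoid_derivative(pre_activation)
    return factor


for depth in (1, 5, 10, 20):
    # The factor that scales the first layer's gradient shrinks as the network gets deeper
    print(depth, backprop_factor(depth))
```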

You are making people fall in love with deep learning.

You mentioned in the previous video that you would talk about momentum in this video, but I have yet to hear it...

Very very amazing explanation thanks a lot!!!

Never Skip Calculus Class.

How do we deal with local maxima? I am still not clear.

I think that at a local minimum "∂L/∂w" is not 0, because the ANN output is not equal to the required output. If I am wrong, please correct me.
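
A minimal sketch addressing this point, assuming a toy one-dimensional loss L(w) = w^4 - 3w^2 + w (chosen only for illustration). It has a global minimum near w ≈ -1.30 and a separate, shallower local minimum near w ≈ 1.13. Gradient descent started on the right settles in the local minimum, where ∂L/∂w is also (numerically) zero even though the loss there is higher than at the global minimum. A zero derivative by itself therefore cannot distinguish the two.

```python
def loss(w: float) -> float:
    # Toy non-convex loss (assumption): two minima of different depth
    return w**4 - 3.0 * w**2 + w


def grad(w: float) -> float:
    # dL/dw = 4w^3 - 6w + 1
    return 4.0 * w**3 - 6.0 * w + 1.0


def gradient_descent(w: float, lr: float = 0.01, steps: int = 1000) -> float:
    for _ in range(steps):
        w = w - lr * grad(w)  # w_new = w_old - lr * dL/dw
    return w


w_right = gradient_descent(2.0)   # start to the right of the hump
w_left = gradient_descent(-2.0)   # start to the left of the hump

print(f"local minimum:  w={w_right:.3f}, L={loss(w_right):.3f}, dL/dw={grad(w_right):.1e}")
print(f"global minimum: w={w_left:.3f}, L={loss(w_left):.3f}, dL/dw={grad(w_left):.1e}")
```

The derivative of this toy loss is also zero at the hump near w ≈ 0.17, which is a local maximum; gradient descent moves away from such points because every update steps downhill.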

Hi Krish, that was also a great video in terms of understanding. Please make a playlist of practical implementations of these theoretical concepts. Then please upload the ipynb notebook just below so that we can practice it in Jupyter Notebook.

Why do neurons need to converge at the global minimum?

Nice explanation, Krish sir...

Ultimate explanation, thanks Krish

Krish bhaiya, you are just awesome. Thanks for all that you are doing for us.

Your videos are like a suspense movie: I need to watch another one, and I need to see it through to the end of the playlist... so much time to spend to know the final result.

Nice

Awesome...

Hi Krish, all your videos are very good. But please do some practical examples in those videos so we can understand how to implement them practically.

Very nice

Nice explanation, Krish sir...

Very nice explanation