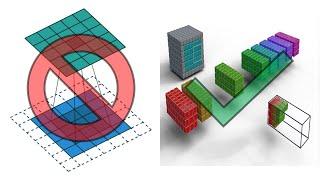

All Convolution Animations Are Wrong (Neural Networks)

Comments:

Your videos are very cool! I wonder if you have thought about how to present Conv3d; it is a challenge once you consider more than one channel.

I don't really care about the animations, the problem is when they start describing convolutions as 2D operations and don't go into detail on the effect of having multiple input and output channels.

I wish I had found this video sooner, but anyway it's easy enough to derive the solutions yourself from 200 Google search results. (Google really sucks nowadays.)

It's actually a good mental exercise to imagine the 3D/4D filter sliding across a batch of images... But good luck finding the correct padding for strided convolutions during backpropagation of both Conv and TransConv layers... I had to derive everything by hand, because the internet has incorrect and, even worse, conflicting formulas for that... 😂
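For anyone stuck on the same padding question, here is a minimal sketch of the per-axis output-size formulas. It assumes the convention documented for PyTorch's `Conv2d` and `ConvTranspose2d`; the function names are made up for this illustration, and other frameworks may define padding differently.

```python
def conv_out_size(n_in, kernel, stride=1, padding=0, dilation=1):
    """Output length of a strided convolution along one spatial axis."""
    # A dilated kernel covers dilation * (kernel - 1) + 1 input positions.
    effective_kernel = dilation * (kernel - 1) + 1
    return (n_in + 2 * padding - effective_kernel) // stride + 1


def conv_transpose_out_size(n_in, kernel, stride=1, padding=0,
                            dilation=1, output_padding=0):
    """Output length of a transposed convolution along one spatial axis."""
    effective_kernel = dilation * (kernel - 1) + 1
    return (n_in - 1) * stride - 2 * padding + effective_kernel + output_padding


# With stride 2, two different input sizes collapse to the same output size...
print(conv_out_size(7, kernel=3, stride=2, padding=1))  # 4
print(conv_out_size(8, kernel=3, stride=2, padding=1))  # 4

# ...so going back from 4 is ambiguous until output_padding picks one of them.
print(conv_transpose_out_size(4, kernel=3, stride=2, padding=1))                    # 7
print(conv_transpose_out_size(4, kernel=3, stride=2, padding=1, output_padding=1))  # 8
```

The floor division in the forward formula is the likely source of the conflicting formulas: it discards information, so the backward pass of a strided convolution needs the original input size (or an explicit `output_padding`) to know which size to restore.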

Is a cube in the filter (or image) a pixel? Or is it a combination of channels?

3D, what tool are you using? Blender?

Amazing work 😍

Instead of spending 95% of the video ranting about how other animations are bad, I would have appreciated it more if you had spent that time explaining how this animation works. I don't think I learned anything from this video. How do you go from an input RGB image of size W * H * 3 to some cube of size 5 * 5 * 5 (+padding)? You lost me at step 1.
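One way to see how a W * H * 3 input meets a filter is to write the loops out. The sketch below is not the video's code: it is a plain-Python illustration with no padding, where the layouts `[H][W][C_in]` for the image and `[C_out][K][K][C_in]` for the filters are assumptions chosen for readability. Each filter is as deep as the input has channels, and each filter produces exactly one output channel.

```python
def conv2d(image, filters, stride=1):
    """Multi-channel 2D convolution (cross-correlation form), no padding.

    image:   nested lists shaped [H][W][C_in]
    filters: nested lists shaped [C_out][K][K][C_in]
    returns: nested lists shaped [H_out][W_out][C_out]
    """
    height, width, c_in = len(image), len(image[0]), len(image[0][0])
    k = len(filters[0])
    h_out = (height - k) // stride + 1
    w_out = (width - k) // stride + 1

    output = []
    for i in range(h_out):
        row = []
        for j in range(w_out):
            pixel = []
            for f in filters:  # one output channel per filter
                total = 0.0
                for di in range(k):
                    for dj in range(k):
                        for c in range(c_in):  # sum over ALL input channels
                            total += image[i * stride + di][j * stride + dj][c] * f[di][dj][c]
                pixel.append(total)
            row.append(pixel)
        output.append(row)
    return output


# A 5x5 RGB image of ones and two 3x3x3 filters of ones (toy values).
image = [[[1.0] * 3 for _ in range(5)] for _ in range(5)]
filters = [[[[1.0] * 3 for _ in range(3)] for _ in range(3)] for _ in range(2)]

out = conv2d(image, filters)
print(len(out), len(out[0]), len(out[0][0]))  # 3 3 2
print(out[0][0][0])                           # 27.0 = 3 * 3 * 3 products summed
```

The filter only slides in the two spatial directions, which is why the operation is called 2D even though the input, each filter, and the output are all 3D.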

Amazing. You have cleared all my doubts in a single shot.

tysm

Thank you so much for this! worth mentioning that the animation has a stride of 2

They are not wrong. They just show a special case. They use the special case because the focus is on things like stride, dilation, padding etc.

It's good to make the 3D tensor animations, but don't call the existing ones wrong. I think I would have still found it easier to understand the existing ones first and then move on to the 3D animations.

This is what I have been waiting for for a long time. This explains everything clearly. Thanks for posting this.

This conv2d animation you made is right, thanks a lot.

Bravo !

I hear you

Geonodes were easier?

Best video about neural convolution and filters!? YES!!!

Thank you so much!

Any plans to add your animations to Wikimedia commons? :)

Thanks so much for this. I also really struggled to find proper animations. I would have liked to see how this looks in the actual neural network, i.e. how the filter can be visualized as the weights, or how the filter parameters are trained. I would greatly appreciate a video on GANs and LSTMs. The LSTM diagrams are terrible. I really struggled to visualize how they connect to the overall network.

I find these "new and correct" animations confusing, I have no idea what's happening there. I assume this is just "the correct way to display convolution" for AI models? As an old school person who used convolutions mainly for 2D image processing (blur/edge detection) I don't see anything wrong about the old animations, that's exactly what we used to do there.

You should've started with the typical 3-channel RGB input image and animated convolutions on that; that's where most people start to get lost as to how the weights match up with the inputs when translating from the 2D mental model to 3D.

Amazing!

Honestly, just write down the formula…

Nice work though!

Nice animation, are you planning on making animations for Transformers as well?

Thank you! Great animation. However, I do have a technical nit pick. Your animation shows an operation known as cross-correlation, which is related to convolution, but it is mirrored. "Convolutional neural networks" use cross-correlations in the feed-forward phase and convolutions in the backpropagation phase.
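The mirroring mentioned here can be checked in a few lines. This is a minimal single-channel sketch with hypothetical function names, using "valid" (no padding) windows: a true convolution is the same sliding-window sum as a cross-correlation, only with the kernel flipped along both axes.

```python
def cross_correlate2d(image, kernel):
    """Slide the kernel over the image as-is and sum the products."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(k_h) for dj in range(k_w))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def flip2d(kernel):
    """Mirror the kernel along both axes (a 180-degree rotation)."""
    return [row[::-1] for row in kernel[::-1]]


def convolve2d(image, kernel):
    """Mathematical convolution: cross-correlation with the flipped kernel."""
    return cross_correlate2d(image, flip2d(kernel))


image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
kernel = [[1, 0],
          [0, -1]]

print(cross_correlate2d(image, kernel))  # [[-4, -4], [-4, -4]]
print(convolve2d(image, kernel))         # [[4, 4], [4, 4]]
```

For a symmetric kernel the two results coincide, and since the weights are learned, a network can absorb the flip either way; the distinction matters mainly when comparing against the textbook definition.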

Adding a bias term after the convolutions would make this a full representation of the process. Anyway, great visualization!

Oh man, I'm so glad someone took a direct approach to this problem. When I was learning, I was so confused by all these animations and explanations in 2D, and then seeing the resulting tensor shapes got me super confused: where did the depth go, and where did it appear from? Thanks for bringing this video to the world!

If all are wrong, then why should I watch this one?

A major thing that feels missing to me in the animations is clear textual labeling. It's fine that you label them out loud, and then, also, it would be more accessible for folks with hearing challenges or cognitive challenges. My crit aside, this animation is lovely, and I'm very impressed with what you've done. You've earned yourself a new subscriber :)

Oh.

Love it. I always thought there were no accurate visualizations on the internet too. Good job.

They are not wrong. They are just displaying a different case than what you are interested in. Maybe they are misplaced in the material you were looking at, but if they were animations for different things, like convolution filters in image processing, they wouldn't be wrong. Have some humility.

Thank you for this, recently I tried to explain why the input and output shapes behave the way they do, and what gets combined with what. These animations will make it sooo much easier!!

They are not wrong. They are a simplification that helps to understand the concept. As any simplification they are incomplete. But not wrong. It's sad that you use clickbait titles.

The example is just a concept. I don't agree with this sensational title.

"a 2D convolution actually takes in a 3D tensor as input and has a 3D convolution as output", well, it depends right? If you have a single channel/grayscale image then the input is in fact a 2D tensor, and each feature outputs a 2D tensor that is joined with all others in the feature map. So if you have a grayscale image with a single feature, the animations would in fact be correct.

I think the animations are perfectly fine, as they simplify a concept to its most basic form for easy understanding. But it is true that after you understand the basic concept, a 3D-to-3D representation is also nice for understanding more common and complex examples.

Disclaimer that I could be wrong as I am by no means an expert, but this is my take from my current understanding of convolutions :)

I really appreciate the effort, and it is a good one, but I would still go with the 2D version. This one is way too jittery for me, between so many things happening at once and the choice of colors.

@animatedai How did you learn Blender? What were your sources?

I liked the idea, but the title is too big for this kind of correction.

Lol, I literally learned this the hard way just about 2 months ago, when the shape for my 2d convolution required 3 parameters, and this made me super confused :,)

Is there a way to access your course online? I'm really interested in this subject!

Great work! Such an animation for grouped convolution would be nice too.
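Until such an animation exists, the bookkeeping of a grouped convolution can be sketched in code. The helper below is hypothetical and only computes shapes and channel visibility; it assumes the `(C_out, C_in / groups, K, K)` weight layout that PyTorch documents for `Conv2d`, and that consecutive channels are assigned to the same group.

```python
def grouped_conv_layout(c_in, c_out, kernel, groups):
    """Return weight shape, weight count, and which input channels each
    output channel reads in a grouped 2D convolution."""
    if c_in % groups or c_out % groups:
        raise ValueError("c_in and c_out must both be divisible by groups")

    in_per_group = c_in // groups
    out_per_group = c_out // groups

    weight_shape = (c_out, in_per_group, kernel, kernel)
    num_weights = c_out * in_per_group * kernel * kernel

    visible = {}
    for o in range(c_out):
        g = o // out_per_group  # group this output channel belongs to
        visible[o] = list(range(g * in_per_group, (g + 1) * in_per_group))
    return weight_shape, num_weights, visible


shape, count, visible = grouped_conv_layout(c_in=4, c_out=6, kernel=3, groups=2)
print(shape)       # (6, 2, 3, 3)
print(count)       # 108, half of the 216 weights needed with groups=1
print(visible[0])  # [0, 1]
print(visible[5])  # [2, 3]
```

In picture terms, each filter becomes shallower: instead of spanning the full depth of the input, it spans only its own group's slice of channels.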

Thank you for putting this out!

amazing!

The animation is just meant as an abstraction of the spatial convolution operation itself. A spatial CNN layer consists of spatial convolution operations across multiple input and output channels (which is what you are referring to)
